0. CHANGE IS GONNA DO ME GOOD

Things change.

Ain’t nothin’ like it once was.

Ain’t a goddamn thing.

— Litany of Pastor Manul Laphroaig

These periods of radio silence happen now and again.

Usually they coincide with what I euphemistically call “being underwater,” a hat tip to what sometimes happens with mortgages. When the accumulated debts of sleep, focus, commitment, emotional energy, cognition, and so on balloon to where there doesn’t seem to be any way out from under it all. Life, as the saying goes, gets away from you sometimes.

This is a story about that, and a little about what happened before, but mostly about what happened next.

I don’t do the whole “content note” thing, usually. That I am should tell you something in and of itself. Following Peter Wolfendale’s notation: Suicide (§0,2-4), Drugs (§3), Mental Health (§*), Memes (§0,3,5), Anti-Semitic Poets (but no actual anti-Semitism) (§1,3,5), Computer Science (§0,2-3,5-6), Game Theory (§3,4), Cognitive Science (§5), Metaphysics (§5), References I Don’t Expect Anyone To Get (§*). I am not currently at risk of self-harm, but I am going to be very, very blunt about a comparatively recent time when I was.

For further context, or perhaps to set the mood, here’s something I wrote while surfacing from a previous span of time underwater, on Ello back when it was still a contender for Social Networks You Remember Hearing About:

Back in late November [of 2014], with the encouragement of @quinn and reddit, I started seeing an EMDR therapist (professionally, not romantically). That’s right around the time I went mostly-dark here. These are probably connected, though not in a bad way.

The theory behind EMDR, as I understand it, is that when something traumatic happens to you, your brain continues to take in all the sensory input that it normally would, but you’re a little busy being traumatised at the time and don’t have the cognitive resources to resolve all that input into coherent memories. Instead, the most salient details get dumped out to disk (as it were), tightly coupled to the PAIN FEAR OTHER HORRIBLE THINGS of the event itself but not to the rest of your memory. If something you experience later reminds you of some of those salient details, you can end up “stuck” in that cluster of fragmented memories, perhaps reliving aspects of the past event, or in my case, sinking into a paralytic sort of despair.

I model my own memory as an N-dimensional graph that I can observe up to a k-dimensional slice of at any given time, where k is in practice somewhere around 6. This fits in neatly with the notion of memories-as-clusters, because in graph theory we have a name for a bunch of points that are tightly coupled to each other but only loosely coupled to the rest of the graph; it’s called a clique. Imagine that you’re a hamster in a Habitrail and you’re too chubby to turn around or walk backward. Most of the little plastic bubbles have just a few tubes connecting them to other parts of the Habitrail, but one group of bubbles has a lot of tubes that only connect to other bubbles in the group, and fewer tubes leading to bubbles elsewhere in the Habitrail. If you scamper into one of the bubbles in this set, it only takes a few steps to lose your way in the maze, and your chances of exiting into the rest of the Habitrail are a lot lower. You’ll make it out eventually, but not without stumbling into a lot of the other bubbles in the clique first.

So, to keep this from happening, you want to reorganise the Habitrail so that even if the hamster does end up in one of the bubbles that’s currently in the clique, it can find its way to another region of the Habitrail just as easily as if it were in one of the non-clique bubbles. How this happens from deciding what takeaway you would rather have had from your painful memories, then free-associating about their details while using your eyes to track a moving object, is a wide-open question. @quinn thinks it’s essentially a biological 0day in some mammalian last common ancestor of ours, which amuses me enormously, and as a side note, if there isn’t a name for a cognitive bias in favour of amusing things, there really should be.

What it feels like from the inside, though, is going back through a bunch of prerecorded raw footage and changing the camera angle after the fact. I say this because that was the first common change I noticed among the memories I explored with my therapist. My experience of them during the moving-object-and-free-association phase was like playing a first-person shooter or reading a story written in deeply immersive first person, like The Catcher in the Rye. Afterward, it was more like watching them from a perspective in the ceiling or somewhere above and behind one of my shoulders, and correspondingly far less intense. All the detail is still there; it just doesn’t hurt so goddamn much anymore.

Far and away the best side effect of the whole thing is that I have a lot of my executive function back. I’m not quite sure how that happened either, but I’m not complaining and neither is @thequux. He noticed a marked increase in my Getting Shit Done ability the day after my first appointment, and while the gradient of the improvement was never quite so steep after that, things continued to improve over the course of the next two months. I had one appointment a week for the first four weeks, then we moved to every two weeks. By the sixth appointment we had run out of things to talk about, so we decided to call it done as of mid-January. Function paralysis is still my hobgoblin, but it’s the “donkey between two equally sized piles of hay” kind of function paralysis that I’ve been arguing with all my life, rather than the “everything is terrible and nothing matters” kind of function paralysis that I’ve been trying to get rid of since 2011, and now I have more cognitive resources with which to work on the former. This for six appointments at sixty bucks a pop. Take that, psychoanalysis.

The tools of wordsmithing no longer feel as comfortable in my hands as they did when I wrote that, or when I wrote this piece as much for my future self as for its nominal recipient. (Thanks, past me!) But, solvitur ambulando, getting comfortable with them again means picking them up and hacking at it. So it goes.

Whatever doesn’t kill me makes me something, anyway.

1. MACHINIC UNCONSCIOUS

There is a sense in which “being underwater” is a remarkably descriptive phrase for it.

I have friends who occasionally have to deal with selective mutism in certain circumstances. From their descriptions, it sounds almost like being frozen inside a shell of glass: they know the idea they want to convey, they might even know exactly how they want to phrase it, but getting the actual words out is where the system locks up.

Being underwater is different. You can move; you can see and hear things above the water, even if rippled and distorted by the motion of the medium. You can react to them in nearly any way you like, though the same distortions will garble your responses. You are not a fish; you know you are underwater, even if being there doesn’t trigger your drowning reflex. But you cannot break the surface, any more than the mute can break the glass.

After a while, the routine becomes predictable: drift awake, fail to do anything that registers with the world above the surface, maybe eat something, drift back off to sleep again. “But that sounds like depression.” Exactly. Exactly. If it were stressful, you’d be waving as you drowned. Whatever part of your brain it is that keeps you underwater, it does so to cushion you from the chaos and din above, all the things you’d have to confront at once if you came up for air or to communicate. It’s only forestalling the inevitable, of course, but this is near mode brain’s domain, and planning is not its strong suit. The derealisation is only it trying to help, bless its shortsighted little heart.

The catch-22 of near mode’s help is that it precludes other forms. “What would make you feel better?” is really hard to answer when you honestly can’t think of anything that would, even after giving it your best effort. Besides, does it really count as feeling bad if nothing you’re experiencing internally seems to register as a feeling at all?

There are three conditions which often look alike

Yet differ completely, flourish in the same hedgerow:

Attachment to self and to things and to persons, detachment

From self and from things and from persons; and, growing between them, indifference

Which resembles the others as death resembles life,

Being between two lives — unflowering, between

The live and the dead nettle.

— T.S. Eliot, “Little Gidding,” canto III

Nevertheless, the needs of the body intrude in their mechanical way once they become urgent enough, eat-drink-smoke-shit-sleep. It’s not that there’s a desire to die; that would imply some desire existing at all. There is simply no desire to live, no action motivated by anything other than the instinctive urge to retreat from acute discomfort. When it’s only (“only”) a chronic absence of comfort, you float, neutrally buoyant, near the bottom.

There are other names for this sort of thing, of course: burnout, anhedonia, athymia, akrasia. But burnout implies fire and heat leaving behind a charred-out shell, and what I’m talking about is a colder, slower process: sinking farther and farther into murky, unthinking depths as your joints quietly oxidise to stiffness. The others, morphologically, are all propositional negations, defining the thing in terms of what it is not. There are a lot of ways to describe what is missing. It is harder to describe what is left.

2. PARANOID MATHEMATICIAN

I never really got over it after Len died.

I should have gotten back on antidepressants after moving to Brussels. I’d been on a good-enough combination (bupropion and sertraline) the last few months in Leuven, but the move meant changing doctors and it took a while to find a good one and executive function was in short supply and between one thing and another I neglected to get that started back up again. Life was, after all, unstoppably going on, with conference talks and papers and a visa to get sorted out and new relationships and a new job and a brand new academic workshop and whatnot, all of which was evidence of at least some sort of functioning, right? Right?

Fast forward a few years. I remarried in summer 2016, after a four-and-a-half-year live-in relationship; we’d talked about tying the knot in 2015, but delayed for a year both out of logistical convenience for our families and wanting to make sure it was the right decision. In retrospect, the cracks in the foundations were already beginning to show by late 2016; by spring of 2017, things were undeniably unstable, and we started couples therapy. The situation deteriorated anyway; by mid-August it was over, and in October he moved out. So much for making sure it was the right decision, I guess.

That may be an unusually terse description, but, well, we live in unusual times. I’d seen breakups weaponised before, of course, but before this I’d never had anyone offer to weaponise a breakup for me, which is a hell of a thing to have to confront when breaking (har!) the bad news to a friend. (Now you have two problems.) Righteous indignation is a hell of a drug, and I imagine that for many people in bad breakups, such offers might be quite comforting. In a sense, reputation damage is just a new species in the “want me to fuck him up for you?” genus of reassurance memes. Problem is, this only really helps if the recipient of the offer finds it reassuring. After a few iterations of this, I got more cautious about who I talked with, and started to wonder how many of the “we’re separating, but on good terms” announcements I’d seen in the past year or so were honest about the second part. I wasn’t willing to put a smiley face on it and pretend it was amicable, though; and whereof one cannot speak, thereof must one be silent.

(We’re gonna talk about some of the philosophical dick moves that went down, though. But not yet.)

Instead, I went on the road. The four memorials that ended up being scheduled for Len — in Houston and San Francisco, and at DEFCON and CCC Camp — had turned into a sort of Grief World Tour, which actually sounds cynically awful when you put it like that, but cynicism is a perfectly valid coping mechanism and it was a template for something to do at the time. Besides, I didn’t want to be around for the move-out. So I scheduled some meetings, booked some flights, made arrangements to stay with friends in various places, and flew to the States on the fifteenth of October. Somewhere in there I accepted an invitation to keynote the Ethereum Classic summit, which with my return ticket to Belgium already bought meant flying home, spending the night there, then heading right back to the airport the next day for a week in Hong Kong. After that my folks talked me into coming to Texas for Thanksgiving in spite of the circumstances, which I tacked some other work and personal travel onto, and at the end of it all, what was originally supposed to have been a three-and-a-half-week-long trip ballooned into seven.

As I came to describe it afterward, I need to remember that both distress and eustress are still stress.

I got home the second week of December, and that was when shit got dark.

One of the things my ex had said after it was clear that things were over was that he hoped he hadn’t damaged my ability to trust people. No, I’d replied at the time, only my ability to trust you. What I didn’t remind him of was a conversation he’d been there for:

If there’s only so far you can trust people in the first place, there’s only so far you can fall if they let you down. (Decidable problems are priceless. For everything else there’s heuristics, and when those inevitably fail, there’s MasterCard.) Being on the road, in the company of friends I still trusted as far as I ever do, had kept me distracted from thinking too much about the sting of betrayal that was still raw and ragged-edged. At home, I was alone with my confusion and disappointment, all that remained of the hope I’d invested.

I’d taken a Dutch-language class last fall, which had been the limiting factor in when I could fuck off to parts international — my flight was the day after the final. I didn’t need to take the next course in the sequence, but I’d gone ahead and enrolled anyway, because it was actually pretty fun and it got me out of the house. That, I reckoned, put a cap on how long I could spend away, and after all the extensions, my last flight landed the day before the first day of class. I figured the timing was tight, but that stepping back into a familiar routine would be, well, routine.

I woke up Monday morning and couldn’t convince myself to get dressed.

Low-executive-function days are nothing new for me, of course. It’ll be okay, I told myself. You’re allowed to miss some classes, it won’t be that big a deal, just make it to the next one. I spent my day at my desk in my pajamas and bathrobe. I don’t remember what I did that day, or the next, but then, I don’t remember a lot of last December in the first place.

I do remember that when I woke up that Wednesday it was an act of will even to get out of bed. I didn’t make it to class that day either.

That night, the nonstop suicidal ideation kicked in.

3. MOUNTAINS OF MADNESS

Rumination sounds like a bucolic thing, named as it is after the first chamber of a cow’s stomach. Researchers describe it as “a passive and repetitive thinking process,” a pattern of “recursive self-focused thinking.” Sometimes it’s painfully dull, sometimes cripplingly embarrassing with Fremdscham for one’s past self. (Much as the past is a foreign country, one’s past self is a stranger, no matter how much déjà vu it provokes.) Recursive is a better description than repetitive for how I experience rumination; every thought, before yielding to whatever spawned it, reinvokes itself, though not necessarily with the same parameters. Without some terminating condition to bring the chain to an end and start winding back up the call stack, the stack grows and grows without bound. It’s times like these that shed all-new perspective on concepts like undecidable.

This was something different. One of the scumbaggier parts of my brain had stumbled onto a Plan that would work with materials I had on hand and figured out how to turn it into a general-purpose thought-terminating cliché. Like this:

Except far, far more detailed. Come on, you need to eat something and then down that entire box of bromazepam and fall asleep in the bathtub. You should tell work you’re not going to be very useful today and then down that entire box of bromazepam and fall asleep in the bathtub. This is starting to get really troubling, let’s talk to a close friend about it and then down that entire box of bromazepam and fall asleep in the bathtub. Like that, for thirty-six hours straight. “Could you go somewhere?” one of the friends I decided to talk to asked, around hour 30 or so. “I don’t know where I’d go,” I told him. “May I suggest not the bathtub?” he replied, gamely. I was in my room, texting on my phone; the drugs were in a drawer in the living room, so at least there was that. But man does not live in bed alone; you have to get up and take a piss eventually, and where there’s a bathroom, there’s enough liquid to drown yourself by.

Here, said she,

Is your card, the drowned Phoenician Sailor,

(Those are pearls that were his eyes. Look!)

Here is Belladonna, the Lady of the Rocks,

The lady of situations.

Here is the man with three staves, and here the Wheel,

And here is the one-eyed merchant, and this card,

Which is blank, is something he carries on his back,

Which I am forbidden to see. I do not find

The Hanged Man. Fear death by water.

– T. S. Eliot, “The Waste Land”, canto I

This is the part I remember least well, writing this some four months after the fact. I remember how I explained it to people during it and immediately afterward; the phrase epistemic crisis came up more than once. My quotidian belief system, whatever system I’ve cobbled together to make sense of my day-to-day environment, was struggling to accommodate the new status quo — and failing at it. Badly. At the time, though, the entire experience was nowhere remotely near that verbal. No, this was other senses firing distress signals. I slept when I could, cried when I couldn’t, and willed myself not to do anything I couldn’t take back, despite what the thought-terminating clichés said. Roughly 36 hours in, both proprioception and my vestibular sense cut out simultaneously. Fortunately, I was still in bed when this happened, so I didn’t fall or anything, but I have to say, the sense of simultaneously not knowing where one is in space and not knowing what direction is up is not one I want to repeat.

At this point I decided that things were Clearly Not Getting Any Better and it was time to upgrade “call for help” to “call for professional help.” But, again, nowhere near that verbally. Well, except for the typing part.

There’s a cultural distinction I have to make here, although I can’t do it alone. Not anymore, anyway. Ever have a belief or an expectation so thoroughly overturned that it’s hard to remember what it was like to have it? Like that, except that other people’s accounts of what to expect are still around to refer back to. I have a decent number of friends in the States who have gone inpatient for one reason or another, some voluntary, some not. The experiences they’ve described to me have been pretty similar to what Scott and Freddie talk about: good luck getting intake to listen, and if by some chance you do get them to listen to you and admit you, good luck getting anyone to listen to you afterward, or treat you as anything other than an object to be dealt with. Being a mental health patient, in the US system, entails a state of moral patienthood as well; you can have agency or care, but not both. You have to be in a really bad place for an American mental hospital to be the better alternative to whatever you’re going through. But, well, I was there.

I had some hints, going in, that Belgium is different. For starters, I have this really good friend, Daan. He’s one of the first friends I made in Belgium, and he’s been through the inpatient system here before — once involuntarily, before I met him, and the second time voluntarily. He didn’t have much good to say about the first time (though I can’t blame anyone for objecting to a forcible Haldol injection), but the second time genuinely did help. Then there was the time another friend of mine who was crashing at my place went off his meds, had a psychotic episode, wandered off, punched a cop, and all that happened was that the police took him to a hospital to wait for his girlfriend to come and get him. (Imagine that happening in, say, Los Angeles.) So there was that. But you never know, do you? Not until you go and find out for yourself.

As luck had it, Daan was online. It was quarter to one in the morning. I told him what was happening, and he promised he wouldn’t call emergency services as long as I kept answering. (In retrospect, this is about the most game-theoretically optimal move I can think of in that kind of situation. Good call, Daan.) It took some searching — the kind it helps to have a native speaker around for — but within half an hour we found a nearby university hospital with a better plan than Scumbag Brain’s. If I could hold on until 8 in the morning, that was when their urgent psychiatric consultation hours started, and if not, I could come to the ER and wait there. Daan asked whether I’d held on to any of the quetiapine that a previous houseguest had mistakenly left, and suggested 25mg as likely to shut Scumbag Brain up long enough to let me sleep without knocking me out for the entire day. I had, so I set multiple alarms, followed his advice, and did in fact get some sleep. When the alarms went off at 7 and 7:30, Scumbag Brain was back at it.

Somehow I motored myself through getting dressed, putting a change of clothes and some toiletries and my e-reader and a USB charger into my backpack, and calling a taxi. I texted my girlfriend in Berlin to tell her where I was going. She texted back that if it got to be 11 a.m. without any further information, she’d assume they’d admitted me and plan accordingly, and reminded me to list her as a visitor if they did.

At the hospital it was about a half-hour wait to see the doctor doing intake that day, an older woman who turned out to be the department head. I have no idea how coherent anything I told her was. I know I mentioned being dumped, the unrelenting intrusive thoughts, how nothing felt real but I still knew that death would be very real indeed. But the how of it is buried under my still-palpable surprise at her response:

“What do you think would be the best thing to do?”

“I think it would be best if I were admitted,” I told her.

She nodded and said, “I think so, too.”

She had my medical records up on her workstation, and we talked about pharmaceuticals. “I’d like to restart you on the bupropion, but for an SSRI, have you heard of escitalopram?”

My nose wrinkled. “That’s one of the isomers of citalopram, right? I was on that one back in grad school, but I hated the side effects.”

Again, not the response my US-conditioned reflexes were expecting: “But sertraline was all right?” I nodded. “Then that’s fine. I’m also going to put down an optional 10mg of etumine, in case the intrusive thoughts get to be too much. It’s an atypical antipsychotic. If you decide you need it, you can just ask at the nurses’ station, okay?” Gentle Reader, I have seen patient-controlled analgesia before, but this was the first time I’ve ever encountered patient-controlled antipsychotics.

I don’t think I can emphasise enough how novel this kind of approach is to someone accustomed to the shut-up-and-take-your-medicine dynamic of the American medical system. The incentives are simply that different. In the US, every patient is distinctive on two different axes: condition and insurance coverage. Since insurance is what pays providers’ salaries, and every insurance plan negotiates different rates with providers, it can be very difficult to get providers to understand that your condition is what you are actually there about. It’s a principal-agent problem; the patient is the principal, but the insurer is their agent, and because the agent has all the control over pricing negotiations with the provider, the principal (i.e., you) runs all the risks of the moral hazard (i.e., not getting the care you need for your problem). In Belgium, there’s a standard tariff that all insurers and providers have to adhere to, which means that to providers, patients are special and unique snowflakes along only one axis: what’s wrong with them. When that’s your starting point, suddenly it starts making sense to treat people according to their individual concerns, rather than as interchangeable instances of some pathological category. Whoda thunk?

I also lost count of how many people reminded me, the first day I was there, that I was there voluntarily and that if I wanted to, I could leave any time. Definitely the intake psychiatrist, as well as the psychiatrist they assigned to me, but also a couple of nurses, unprompted. I don’t know whether I was giving people suspicious looks, if that’s just standard practice there, or what, but enough people said it that I eventually relaxed and believed them. I asked about visitor policies and found out that there weren’t any — whoever wanted to visit could just turn up during visiting hours, no prior arrangements required. I kept my phone, my e-cig, and my e-reader, and when my girlfriend arrived, she brought more clothes, my laptop, and my violin. (I’m trying to imagine how an American psych ward would respond to somebody wanting their violin. “You want WIRES? What do you think you’re going to DO with them?” Uh, practice scales? Be glad I don’t play the cello, or that she left my bass at home.)

I did need the antipsychotics, the first couple of nights. Scumbag Brain was still trying its best; during the day I was scattered and drowsy, but in bed, my thoughts raced unbidden down all the same feculent ratholes I’d come to the hospital to escape, until I D2-blockaded them off for the night. After a few days of that, things got weird: I still couldn’t sleep, but instead of trying to kill me, the racing thoughts were helpful, apart from the fact that insomnia never helps. I brought this up to my psychiatrist, along with the lack of appetite that had kicked in after tapering up my sertraline dose, and after another round of Dorking Out About Pharmacodynamics, we decided to try adding a bedtime dose of an atypical antidepressant whose side effects include drowsiness and hunger. With sleep, appetite, and mood mostly stabilised, I finally had the cycles to devote to resolving the epistemic crisis.

(Also notable: nobody seemed to think it was unusual for a patient to have questions about things like biological half-lives or receptor binding affinities. I’ve had American doctors react to that as if I’d challenged them to a dick-measuring contest that they were afraid of losing.)

As I put it to people after I left: all told, the hospital was a safe environment in which I was able to experiment with psych meds under expert advice for as long as I felt it was necessary, but nothing was forced on me. I never thought anyone would say this of a psych ward, but it was honestly one of the most autonomy-respecting experiences I’ve ever had.

4. DISSOCIATED KNOWLEDGE

That previous section took a long time to write. “It was difficult,” you might say. But not “difficult” in the sense of harrowing or exhausting. More like difficult to access. I know, retrospectively, that these things happened. I have logs for many of them. I know that they happened to a person who I was, but it is difficult to remember being that person, and therefore difficult to write about what it was like to be her. I know that she packed a backpack, for example, because of the details and omissions involved in the facts of it; I eventually brought that backpack home, along with the things my girlfriend and other friends brought me, and I still have the toothbrush the hospital gave me because past-Meredith forgot to pack one. But I can’t remember doing any of the packing.

If you’re wondering whether it feels a little weird to have had someone you don’t clearly remember being make potentially life-altering decisions about you, the answer is yes.

Nor is this the first time that’s happened. In fact, the previous time, it was even more life-altering, but I’ve had more time to get used to the side effects.

Len’s suicide plunged me into an emotional and ethical dilemma. On the one hand, I was unspeakably angry, but on the other, allowing any of that anger to settle on my memories of him brought on overwhelming waves of guilt over being unfair. I circled that Gordian knot for months, searching for a place to slice it that wouldn’t leave me hating either him or myself for the rest of my life. The eternalist stance of “everything happens for Some Higher Purpose” only works as long as you can get past the problem of theodicy, which I can’t. But I wasn’t willing to punt to nihilism, either; when something matters, I can’t pretend that it doesn’t. Things do happen for reasons, and sometimes those reasons are terrible. Sweep them under the rug, and they’re only going to come crawling out when it’s least convenient.

At last I figured out how to thread the needle, and it only meant having to tear down my entire value system and rebuild it on a fresh foundation. This sounds like a lot of effort, but how many QALYs does “spending the next forty years consumed by hatred” come out to? Unless you’re a lot more present-biased than I am, the math is pretty obvious. And that’s how I ended up with universal autonomy as a terminal value. Not just my autonomy, everyone’s. I don’t have to like the ends that people pursue with their autonomy, but the fact that someone might choose an end for themselves that I wouldn’t choose for myself or them attests to exactly how real their autonomy is. Where suicide is concerned, “end” is uncomfortably literal, but my comfort really doesn’t factor into it. (Pieter Hintjens was, of course, especially supererogatory in that regard. But I digress.)

What was especially interesting about this was that it opened up a lot more productive directions not just to think in, but for the emotions to go. If for no other reason than pragmatism, it just isn’t that useful to be angry at a dead person. How are you ever going to resolve it? Being angry on their behalf, on the other hand, is all kinds of fuel. Look at the situation through the autonomy lens. Certainly there were a lot of path-dependent reasons that fed into Len’s secrecy about his seizures and mental health issues, and I even learned the details of some of them. But I refuse to be fully deterministic about it; we are talking about free will here, after all, so determinism is an absurd position to take in the first place. I also refuse to passively accept some of those reasons, like mental health stigma, and not to put too fine a point on it, coming up seven years on from The Event, that refusal has more than a little to do with why I’m still here today.

Or spiral back outward, past the second link in this article: look at Mark Fisher’s life through the same lens. He did a lot of that for us while he was alive, conveniently; I didn’t know him then, so his writing — about mental health, about precarity, about all of it — is how I’m getting to know him now. Capitalist Realism engages with it directly, as do significant amounts of his blog; there’s a lot for me to digest still, but I’m confident there’s more there there, whatever it ends up being.

You can imagine there’s a lot of cathexis bound up in this, of course. You’d also be right. Which was why having it weaponised against me, from completely unexpected quarters, was such a tactical nuclear manoeuvre.

My ex and I had made a variety of commitments to one another, some explicit, some implicit. Our marriage vows were an explicit one; giving each other shell accounts, and then sudo capability, on our respective laptops as the relationship deepened were somewhat more implicit ones. I will take care of this, do right by it. I could have looked at his porn collection any time I liked; Gentle Reader, I am more content when people feel secure in their privacy, and I never once did.

(There is perhaps an entire essay to be written on the emergent social ritual of deleting one’s ex’s shell account, but this is not that essay.)

In any case, I’m dancing around with preliminaries as I flinch back from engaging with the content of the epistemic crisis. On with it.

When you get down to the very bottom of it, the fault at the core of the betrayal was bad data. Let’s set aside, for the purposes of this discussion, what process generated and sent the bad data, and just look at the receiver side. The most salient feature of the data at the time was that it looked genuine. Stop me if you’ve heard this story before.

Over time, however, there grew to be a disconnect, a divergence, between the affect of the data and the content of the data. The protestations of loyalty became more and more fervent as the evidence of other priorities grew more and more undeniable. “Your mouth says one thing, but your revealed preferences say another.”

Social engineers will tell you that the most effective way to convince someone else of a falsehood is to actually believe it yourself. This is the same principle behind Method acting. Without going into too much detail, let’s just say it works, right up until it doesn’t anymore. There are probably multiple ways for that to happen, but a reliable one is ceasing to believe the lie.

August was when he couldn’t maintain the illusion to himself any longer. He’d been keeping it up, he said, much longer than that — months, at least. Possibly as long as a year.

This was, shall we say, a lot to take in all at once. And in fact I didn’t take it in all at once. Over the weeks that followed, chunks of realisation fell like debris from a deorbiting satellite. Certain plans were no longer tenable; certain goals were that much farther out of reach. The common theme among all of them was “That commitment you thought you could count on? Evaporated.” Burned up on re-entry. Gone.

Steelmanning as hard as I possibly can here, I can see one possible difference in point of view that could lead to such a drastic divergence in expectations: whether one considers a commitment to be an artifact or a process. An artifact is a static entity, fixed into being at the moment of its having become an artifact. A process is a dynamic entity, and it interacts not just with one’s past self, but with one’s present and future selves as well. If commitment is a process, it is a pruning process, a filtering process; one precommits to cutting off certain courses of action that one could, in principle, otherwise take.

On the other hand, if commitment is an artifact, then “I meant that commitment at the time” is a semantically non-null statement. The artifact might once have been a treasure, but now it is trash. If it’s a process, though, that statement bottoms out in vacuity.

I’m dancing with prolegomena again. On with it.

To me, a commitment is an exercise of one’s present autonomy that is binding on the space of choices available to one’s future self. When we make commitments, we choose to limit the combinatoric richness of that option space in favour of some other kind of richness, some other value. So it caught me completely flat-footed when my ex characterised my expecting him to live up to the commitments he’d made in his wedding vows as trying to restrict his autonomy. Wasn’t that supposed to be his lookout when he made them?

It was an irrefutable argument, I’ll give him that. Even now, I can’t really argue with it. People get to do what they want with the courses of their own lives. I just, you know, expected something resembling continuity, resembling consistency. Perhaps that was my mistake in the first place.

The value structure held. As much of a shock as a direct hit on its core premise was, it was designed to take hits like that and keep functioning. Overengineered, you might even say.

What lay underneath it was a different story.

Consider a building. A hardened building, let’s say, resilient to external shocks. Put it on a proper pile foundation, no redneck engineering here. Hit it hard enough, though, and if hydrogeological conditions are right, the soil under it can liquefy. Usually this is an earthquake’s fault, but a sufficiently powerful explosion can do it too. The sand or silt goes quick, anything trapped in it but buoyant rises, whatever rests on it sinks, and the soil goes slow again.

If the building is a value structure, meaning is the ground it rests on. Not the piles (those are part of the building), the ground. What happens when that ground isn’t solid anymore? We might also use another metaphor and say that meaning is upstream from value. What happens downstream when the river shifts course somewhere upstream?

Whatever your answers to that are — that’s what I mean when I say epistemic crisis. I had no solid ground, no charted course, no basis from which to apprehend what anything meant, for a while there. “Surprise, the last N months of your life were fake news!” AAAAAAAAAAAHHHHHHH.

How people behave in a crisis is, not to put too fine a point on it, weird. Rationality typically doesn’t enter into it, unless it’s the instrumental rationality of having trained a response for a particular kind of crisis (e.g., active shooter firearms drills), and even then the bulk of the reasoning went on long beforehand. During the Incident itself, the elephant has control, not the rider.

Where this gets complicated is when the elephant is in a position to make binding commitments on its future self — which includes not just the elephant, but the rider. Inpatient psychiatric care is of course one such binding commitment, but then again, so is suicide. “Diminished capacity” is such a euphemistic way of describing it, even if it’s true that I was operating at maybe 60% of my usual cognitive ability when I checked out of the hospital and am probably back around 85-90% now. It’s a paradox: if you know (in some sense) that you’re operating at diminished capacity, how do you know whether you’re competent to evaluate the course of action you’re considering? Or, put another way, if you’re not actually in control of your rational faculties, can you actually be said to be acting autonomously?

I didn’t have good answers to those questions then, or if I did, I don’t remember them. I don’t have good answers now, either. The best I have is a first approximation that meets them with another question: do you want to continue to be a process, or not? For some people, either the desire to live or the fear of death is strong enough to risk a period of captivity, whether imposed by oneself or by third parties. Others, for whatever reasons (and oh, there are many), would rather halt than take that chance. I don’t get to choose for anyone else, but I do get to decide for me.

5. ALFRED NORTH WHITEHEAD

The temporal logic of actions. Communicating sequential processes.

Something there is that doesn’t love static analysis.

It has been remarked that a system of philosophy is never refuted; it is only abandoned. The reason is that logical contradictions, except as temporary slips of the mind — plentiful, though temporary — are the most gratuitous of errors; and usually they are trivial. Thus, after criticism, systems do not exhibit mere illogicalities. They suffer from inadequacy and incoherence.

— Process and Reality, p. 6

Not to go all strong-form-of-the-Sapir-Whorf-hypothesis on you or anything, but Steve Yegge has a hell of a point about object-oriented programming and nouns. We laugh at satire like FizzBuzzEnterpriseEdition, for values of “we” that include anyone who’s ever had to deal with Spring or Struts, because Java’s relentless fixity on things lends itself to self-parody, but also because we’ve seen what happens when languages with no first-class functions have to deal with passing functions as arguments anyway.
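For anyone who hasn’t had the pleasure, here is a minimal sketch of the contrast, in Python (my choice for illustration; the names `IntTransformer`, `Doubler`, and the two `map_with_*` helpers are hypothetical). The first half wraps a verb in a noun because that is the only way to pass it around; the second half just passes the verb.

```python
from abc import ABC, abstractmethod
from typing import Callable, List


class IntTransformer(ABC):
    """Noun style: the function has to live inside an object to be passed."""

    @abstractmethod
    def transform(self, x: int) -> int: ...


class Doubler(IntTransformer):
    def transform(self, x: int) -> int:
        return x * 2


def map_with_object(t: IntTransformer, xs: List[int]) -> List[int]:
    # The caller must build a whole class just to say "double it".
    return [t.transform(x) for x in xs]


def map_with_function(f: Callable[[int], int], xs: List[int]) -> List[int]:
    # Verb style: the function itself is the argument.
    return [f(x) for x in xs]


noun_style = map_with_object(Doubler(), [1, 2, 3])
verb_style = map_with_function(lambda x: x * 2, [1, 2, 3])
```

Both calls compute the same list. The difference is only in how much ceremony it takes to name the doing.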

Alan Kay takes it even farther, saying that he regrets having used the term object to refer to values in Smalltalk in the first place, “because it gets many people to focus on the lesser idea.” But we’ll get back to that.

Cumbersome epistemology and equally cumbersome grammar aren’t the only things that can make object-oriented code difficult to reason about. Hidden state is much, much worse. The principle of abstraction — hiding implementation details of library or framework code from the people who use it — has great intentions when it comes to reducing the cognitive burden on developers, but only delivers on them insofar as the implementation isn’t terrible. (Hands up everyone who’s had to deal with somebody else’s handrolled ORM. Or somebody else’s O(n³) sort. Or somebody else’s buggy comparator. Right, that should be enough; if your hand didn’t go up, find someone whose did and get them to explain it to you.) Maintaining an OO codebase over time (in my experience, particularly in an enterprise environment; your mileage may vary) requires eternal vigilance against scope creep, lest one class of object try to become God or leaky abstractions dissolve your architecture into a big ball of mud.

The functional paradigm opposes the object-oriented paradigm in both regards: immutable records rather than mutable objects mean never having to reason about “but what if the value of that field changes?”, and if anything, functional languages privilege verbs (i.e., functions) over nouns (it is right there in the name, after all). Mysteriously, though, there is fixity in the Functional Kingdoms as well; immutability is one aspect of it, and another is static typing. (Meanwhile, Alan Kay drifts overhead in a hot-air balloon, shouting into a megaphone about message passing. But we’re not quite there yet.)
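A small sketch of the immutable-record half of that, again in Python and under stated assumptions: `Reading` and its fields are made-up names, and a frozen dataclass is only an approximation of what a functional language gives you (it is shallowly immutable, so a mutable value stored in a field could still change underneath you).

```python
from dataclasses import FrozenInstanceError, dataclass, replace


@dataclass(frozen=True)
class Reading:
    """A hypothetical immutable record; the names are illustrative only."""
    sensor: str
    value: float


def try_to_mutate(record: Reading) -> bool:
    """Return True if the assignment was refused, as frozen=True promises."""
    try:
        record.value = 0.0  # type: ignore[misc]
    except FrozenInstanceError:
        return True
    return False


original = Reading(sensor="thermo", value=21.5)
refused = try_to_mutate(original)

# "Changing" a field means building a new record; the old one is untouched.
updated = replace(original, value=22.0)
```

Once `original` exists, nobody downstream has to ask what its `value` is now. It is whatever it was.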

Types help both us and compilers reason about what functions do. A helpful compiler will tell you when it doesn’t make sense to do some function to a particular thing, and why. An unhelpful compiler (looking at you, every single C) will look the other way as you cast puppies through a void pointer into wood and feed them, yelping, into a woodchipper.

Even more helpful compilers will reason for you about not just what goes into and comes out of functions, but their side effects as well. Monads, say what you will about their other qualities, encapsulate side effects — which is all well and good until you have to compose them. Enter monad transformers, and applicatives, and … wait, why is everything suddenly a noun again? If we’re trying to characterise well-behaved software, is “what is it?” really a better lens than “what does it do?”

Perhaps I’m being unfair to PL theory here. (I am occasionally mistaken for a PL theorist. It comes from a place of love.) Scala and Go have turned traits and structural typing (the idea that a thing is what it can do, checkable at compile time — you can also think of it as static duck typing) into concepts that a randomly selected working programmer has probably heard of. Meanwhile, out on the bleeding edge of type theory, session types and linear dependent types hold promise as tools for characterising the correct operation of entire protocols. This has tremendous implications for security, but after thirteen years in this field, I’ve learned never to close my eyes. The attack always comes from something you forgot — or didn’t bother — to model.
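To make “a thing is what it can do” concrete, here is a rough Python analogue using `typing.Protocol`. This is a sketch, not Scala traits or Go interfaces: the class names are hypothetical, the static half of the check only happens if you run an external type checker such as mypy or pyright, and the `isinstance` test enabled by `runtime_checkable` only confirms that a method with the right name exists, not that its signature matches.

```python
from typing import Protocol, runtime_checkable


@runtime_checkable
class Quacker(Protocol):
    """Anything with a quack() method counts; no inheritance required."""

    def quack(self) -> str: ...


class Duck:
    def quack(self) -> str:
        return "quack"


class Robot:
    # Never mentions Quacker, but satisfies it structurally.
    def quack(self) -> str:
        return "QUACK.EXE"


class Rock:
    pass


def provoke(thing: Quacker) -> str:
    return thing.quack()


sounds = [provoke(Duck()), provoke(Robot())]
```

Passing a `Rock()` to `provoke` is the kind of thing a type checker would flag before the program runs, which is the “static” in static duck typing.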

I’ll just cut myself off right here before I go and recapitulate the entire debate between substance metaphysics and process metaphysics through the lens of type theory. (Maybe another time, though.) That said, there’s a historical thread of inquiry here: it starts with set theory, then Russell and this section’s namesake, Whitehead, develop the theory of types to overcome inconsistencies in set theory. Much later on, Per Martin-Löf draws on L. E. J. Brouwer’s intuitionistic mathematics to create intuitionistic type theory, from which the entire ML family of languages springs. Whitehead went in a different, more abstract direction, though. Setting aside the formal language of the Principia, he drew on casual, everyday language,

The common word exact without vulgarity,

— T. S. Eliot, “Little Gidding,” canto V

which he then used rigorously and methodically

The formal word precise but not pedantic,

— ibid

to develop a process-oriented account of existence.

The complete consort dancing together)

— ibid

Instead of entities having primacy, interactions do. Existing becomes a process of interacting with the universe in some way.

(Bubbles break on the surface of the water. Alan Kay circles overhead in his balloon.)

Interaction, as a matter of definition, entails a subject and at least one other subject to interact with, although the other subject can also be the self. (See also: reflexivity, recursion.) “What is the type of one endpoint transducing?” Mu. The question is poorly posed, not only when the other subject is absent, but when the recursion goes too deep and the stack overflows. “But Meredith,” I hear you say, “whence this radical intersubjectivity? Are you really calling even inanimate objects subjects?” Well, yes, actually. As I type on this keyboard, my fingers wear down the texture of the plastic keys; eventually the keys will become smooth and polished from use. At the same time, the plastic wears off microscopic bits of skin from my fingers. This may sound like a semantic quibble, but let’s look at it from the perspective of syntax for a moment. Ditransitive verbs are those which require two objects (a recipient and a theme), such as “lend” or “show”. English does not, to my knowledge, have any disubjective forms, although copular constructions are somewhere in that vicinity. Probably other stative verbs too, if you’re willing to admit prepositional phrases as characterising interaction, which I for one am. So we’re operating at a bit of a disadvantage trying to talk about disubjective interaction in a language that seems grammatically bound and determined to characterise all interactions in terms of subjects and objects, but if there’s one thing I’ve learned to count on all these years, it’s that linguistic robustness finds a way.

But we were talking about stack overflows. We were also talking about bounds on volition, earlier.

Bounds on volition are pretty clearly downstream from bounds on cognition, in my point of view. You can’t willingly do something it never occurs to you to do. Cognition, in turn, is bounded by the physical limits of inference. We can pose and debate the existence of additional bounds, but that one bounds everything. Is this pessimistic of me? Not at all. Like a sucking chest wound, halting is Nature’s way of telling you to slow down, because you will not get any farther no matter how hard you try. Call it computable realism, perhaps, for the pun if nothing else. “Wait, are you saying you agree with the simulation hypothesis?” No. But I am saying that nature computes, that what it can compute is bounded, and that those bounds are guardrails beyond which those questions that can be asked cannot necessarily be answered.

When we’re talking about brains, things get even more complicated, because brains are self-updating processes. Self-modifying code, if you will. Self-modifying hardware, really, although the distinction gets blurry down at the physical layer. Neurons that fire together wire together, and these wirings change in density and strength with repeated firings; old patterns fade away and new patterns emerge in the tapestry. Over time, the code says something different. Often it interacts with old code in unexpected ways, not that this should surprise anyone. Sometimes it interacts with itself in unexpected ways, not that this should surprise anyone. (And yet it seems like, every time, it does. Including me.)

I’ve gotten pushback on this view of consciousness, usually from people who think I’m treating sapience as deterministic and find that sort of thing distasteful, though occasionally from people who think I’m treating sapience as deterministic, conclude there are cheat codes to manipulating people (and that I’m holding out on them), and generally give the first group cause for their distaste. If anything, I think that both skepticism of the computational view of consciousness, and naive mechanistic interpretations of it, are predicated on a sort of ascetic ideal that even real-world source code almost never achieves in practice, much less people. (This is why I keep saying that langsec is also about usability, but that’s a completely different tangent.) Clean layer separation, simple functional interfaces, fully specified state machines, well-typed outputs — these are all well and good in software, and bad things can happen when we don’t employ them. Still, the underlying hardware matters too, and both the software and the wetware in humans are spaghetti all the way down. Different spaghetti, for everyone, with equally individual lifetimes of experiences. Rephrase the central dogma of genetics into, if you will, the computational dogma of genetics: DNA is unarchived into RNA, which is compiled into the protein machines on which the self-modifying algorithms that are us execute. The worst spaghetti code a human — or any team of humans — has ever produced cannot possibly compare to the explosive mess that is the human metabolic system. We had a chart of it at the office at IDT; sometimes I’d stand there and stare at it, thinking, “This is so ridiculously baroque it could only possibly have evolved.”

This is also why I can’t get on board with the concept of coherent extrapolated volition: it’s Laplace’s demon, but for people. Wetware decision processes are neither pure nor functional, simple as that. They are side-effecty as hell, and trying to treat them otherwise is trying to abstract away implementation details that turn out to be necessary in much the same way that time turns out to be a necessary implementation detail if you need to reason about timing attacks. It’s not the extrapolation part I disagree with, it’s the coherent part. Getting a system to run stably for a short while is easy. Keeping one up longer-term takes a lot more work. You could almost think of computation as an attractor, given how often it crops up by accident, but it’s an unstable one. As Paul Snively likes to say, “information wants to be free, computation wants to diverge.”

(Alan Kay drops a corked bottle from above. It splashes and bobs in the water; a pair of hands breaks the surface, followed by the rest of a figure. It uncorks the bottle, tips out a rolled-up piece of paper, and unrolls it. It reads: “Turns out, we were the real weird machines all along.”)

6. FINAL FORM OF THE STATE

At last: the end. No, an. It’s never over.

At last, an ending that, sometimes, you’re happy

To walk away from. Sometimes, you walk back.

At last: where do we go from here?

It’s always better to have an eventual destination in mind when asking this. At least, better than “anywhere else,” which is a common default in these sorts of situations. But no amount of care with respect to your destination can improve your odds of escaping yourself; as Buckaroo Banzai sagely advised us, “No matter where you go, there you are.”

There’s actually a sense in which not all that much has changed. I still have the same friends, same blog, same hobbies, same apartment (slightly redecorated), same redonkulously loyal and affectionate cat. Same research, (mostly) same colleagues, same workshop. I’ve been on sabbatical from my job since mid-February, because a chief scientist who needs a three-hour nap after reading a twelve-page paper is a chief scientist who needs some time off to finish getting her head back in order, but that’s been going relatively well. In the meantime, I’ve kept busy, shuttling back and forth between colleagues and conferences now that it’s finally goddamn spring. (Nearly summer, now. This piece has been baking for a while.) I also got myself accepted to the DeepSpec summer school on formal verification, with plans to come back to work once that’s over. It’s a bit like LARPing as an unaffiliated early career academic, except with fewer worries about where the next paycheck is coming from and no publish-or-perish pressure.

Crucially, I also still have a brain that, for all its features, still tries to up and murder me every once in a while. The one big difference is that now I have tools for that. Maybe applying them over time will unwire the neurons that forged themselves together in firing, bulldoze and regrade the slippery slope that leads down to suicidal psychosis. Or maybe it won’t, in which case, here’s to better living through chemistry and safety nets that work.

From a pragmatic standpoint, I have to consider the very real possibility that this may not be my only trip through the inpatient system. Events that shake me up so thoroughly that I have to dig all the way down to metaphysical bedrock to eventually make sense of them are few and far between — thankfully — but quod erat demonstrandum, they can happen. Suicidality may follow a kindling model, independent of depressive episodes, or maybe not; the research is not extensive. Perhaps that will change, in the wake of the suicides of Kate Spade and Anthony Bourdain. But those of us enmeshed in evasion games with the black dog don’t have time to wait for the research to tell us what to do; we are made biohackers by circumstance, trying to keep always a step ahead of it. One more cycle. One more tick.

You’d think I’d be angrier, but after all the ping-ponging around Kubler-Ross’ web of grief states — which should never have been called “stages” to begin with — what I’m left with, when I glance in the rearview mirror, is only a sense of profound disappointment. The kind it takes work not to turn into pity as the past gets more distant, at least if one looks back often enough to make that an issue. Easier, then, simply not to look back at all, apart from the necessary safety checks when changing lanes.

There’s no moral to this story, except possibly: something always happens next. But morals answer why?-questions, and this wasn’t a story about why, it was a story about what and some inferences about how. Which is to say, a story about what and a story about a story about what. “Something always happens next” is an answer to “why should I keep going”; it is not necessarily a good answer or a right answer, if every next thing that can happen is too much to bear. It is only a true answer. That doesn’t make it useful.

I can’t provide an answer that will unerringly convince you, or your friend, or your loved one, or the celebrity you admire, to keep going. I’m not even entirely sure how I convinced myself. You’d think, having been through it, that I could account for it. Instead, all I know is that I saw the net, I grabbed at it on the way down, and it caught me. Not everybody has a net under them. Not everybody can see it when it’s there. Not everybody can bring themselves to reach out, even if they see it. Some people who reach out will still slip through the cracks anyway. Survivorship bias is also a hell of a drug, and I can’t promise you that I’m not on it.

That said:

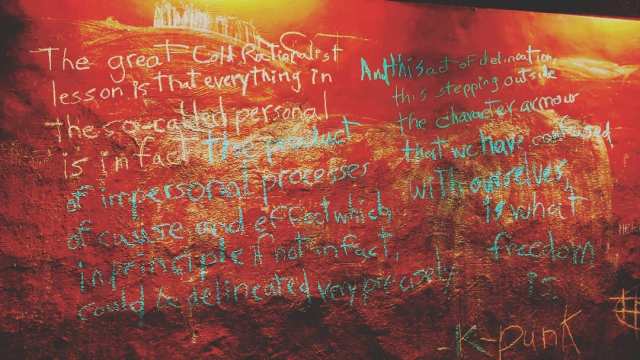

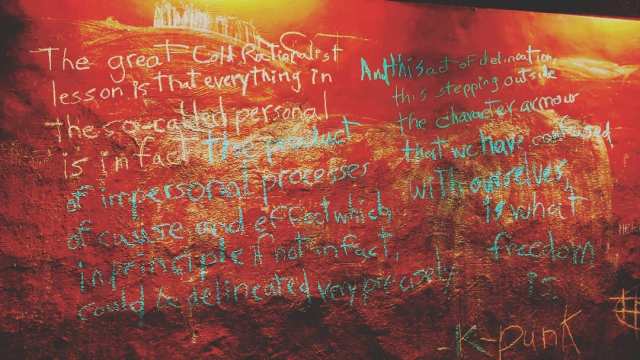

“The great Cold Rationalist lesson is that everything in the so-called personal is in fact the product of impersonal processes of cause and effect which, in principle if not in fact, could be delineated very precisely. And this act of delineation, this stepping outside the character armour that we have confused with ourselves, is what freedom is.” — Mark Fisher, transcribed on my kitchen wall by Giancarlo M. Sandoval while I was in the hospital.

The lines between machine and brain are nebulous, and that’s okay. Not in the shopworn “this is fine” sense; no, really, this is not a threat. It’s a handle.

Just a little more computer science. I promise there won’t be much more.

A handle is an interface to some resource: a file, an output buffer, a network socket, a process. Like the handle of a shovel or an axe, its construction manifests how you interact with it. A handle is opaque if it doesn’t afford you any meaningful interactions: if all you can really do with it is pick it up, put it down, or hand it off to something else. There isn’t really a commonly agreed-upon antonym for opaque here, but we might call a handle that gives you recognisable affordances for interacting with it an introspectable handle. A file handle affords you the ability to seek to some point in the file, to read from it, and to write to it. A socket handle affords you the ability to send and receive data over it. A process handle affords you the ability to redirect its output, or to attach some supervening process to it. A debugger, for example.
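If that’s too abstract, a minimal Python sketch of the file case follows. The scratch file and variable names are mine, purely for illustration. The bare integer that `tempfile.mkstemp` returns sits near the opaque end: by itself it has no methods for reading or seeking, and about all you can do is hand it off to something else. Wrapping it with `os.fdopen` gives you the affordances.

```python
import os
import tempfile

# A scratch file, so the sketch touches nothing real.
fd, path = tempfile.mkstemp()

# fd is just an int. Hand it off to get a handle with affordances.
with os.fdopen(fd, "w+b") as handle:   # "w+b": read and write, binary
    handle.write(b"hello, handle")     # write through the handle
    handle.seek(7)                     # seek to an offset in the file
    tail = handle.read()               # read from there to the end
    handle.seek(0)                     # back to the start
    whole = handle.read()

# Closing the handle released the descriptor; now tidy up the file itself.
os.remove(path)
```

Here `tail` holds the bytes from offset 7 onward and `whole` holds everything that was written.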

In an extremely Rube Goldberg but no less real sense, there is a handle I can use to attach a debugger to the process that is me. It involves taxicabs and insurance and the most mockably baroque bureaucracy in western Europe, but it’s there. There aren’t words for how grateful I am for that. The Rube Goldberg machine doesn’t need to hear them — it can’t anyway — but I wish I could figure out how to construct them for other people to hear. To know that it’s possible. I’ve been trying for about ten thousand words now, and the best I can do is hope it helps.

Debugging is the programmer tracing the program, step by step or in leaps and bounds, forward and backward in time. Even when the program is the programmer, this is interaction: self-interaction is still interaction.

But eventually self-interaction gets predictable. And the only way to bring in unpredictability is to go out and interact with things you can’t predict.

Quod erat demonstrandum.

May you never halt. I’ll try not to either.

Believe it or not there are still people active on Ello at this time. Sure it’s mostly become Pinterest for Stylistas with Contests at this moment, but the miscreants & deviants still hang at the odd corner here & there…

That said glad to hear you’re back over water. To continued good health! 🍻

Glad you’re here, take care.

wow. thank you.

I’ve read it thrice.

I’d wondered where you have been. So nice to read and contemplate another piece by you.

Really sorry you had to go so far down ‘my’ neck of the mental health road.

And while being mad at your deceased loved one may not seem productive, it is perfectly normal and perfectly confusing. However, that’s reality – even the best people are not perfect and will sometimes disappoint you (hopefully not on such an epic scale with any regularity).

It takes a while to get away from thinking about the bad stuff and being triggered by memory ‘land mines’, but eventually you get to a point where you mostly remember the good stuff and are happy for having had that experience at all instead of hurting over the end of that period in your life.

May the Lord give you the strength to get to that point. Next time you’re in Texas, holler and we’ll hang out or something.

Good to hear you’re in better spirits.

Thanks for the “underwater” metaphor. That’s a good way to encapsulate where my life has been lately, and it gives me another hook to try to debug it. Seem to have found a lot of those lately, that I have – I’d call it a sign if not for knowing that the notion is faintly ridiculous. That aside, just having a name for something makes it tractable, in a sense. And now my flailing has a name. Thanks for that.

We humans really are bizarre, klugy machines, aren’t we?

Fantastic post but I was wondering if you could write a little more on this subject? I’d be very thankful if you could elaborate a little bit more. Kudos!

Great information. Lucky me I found your site by chance (stumbleupon). I’ve bookmarked it for later!
